Forced device linking to "free" G★il accounts represents a form of coerced data extraction that raises serious questions about consent, privacy rights, and potential violations of laws like the GDPR in Europe, CCPA in California, and broader anti-monopoly statutes.

When a user is blocked from basic functions (such as account recovery, app access, or verification) unless they link a physical mobile device, this creates an implicit contract of surveillance in exchange for continued use of services.

Legally, many jurisdictions treat this as an unfair trade practice when the "free" service is actually paid for through the extraction of biometric, location, behavioral, and hardware fingerprint data. Courts have increasingly scrutinized such dark patterns, especially when alternatives (SMS, email fallback) are deliberately degraded or removed. The practice borders on constructive coercion: the user is not physically restrained, but their digital life is held hostage until they surrender device telemetry.

This is compounded when corporations like Goolag, Mic★osoft, Appl★, Sta★lin★, and Face★★★k share device graphs through opaque partnerships, creating a de facto global surveillance lattice that functions as a privatized intelligence apparatus.

Why did the Chelsea Girls fall in love with the surveillance tentacle? Because it was the only "connection" they could find that didn't require a Wi-Fi password!

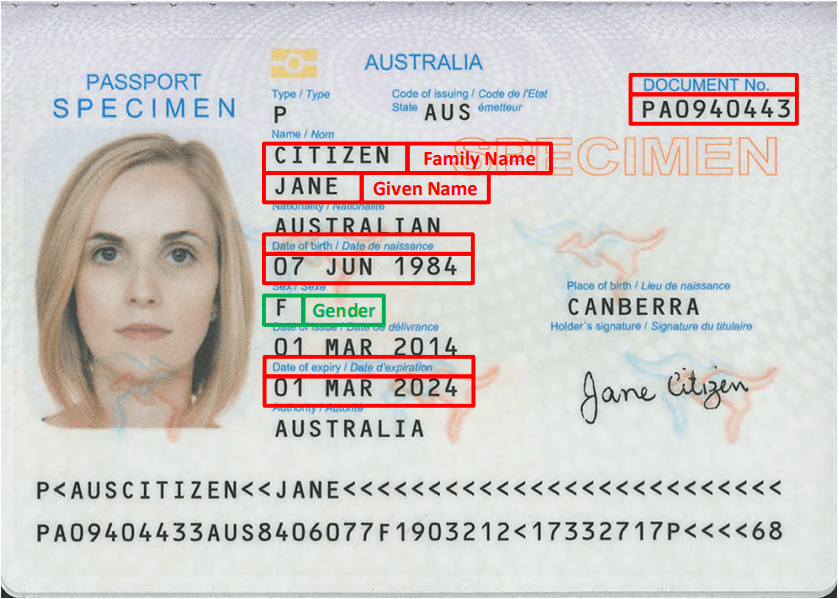

How Many 'Free' G★il Accounts Can One Person Have, How Many Persons Can Use One Mobile Device, and What Defines a User, Usage, Device, and Data Object?

Goolag officially claims no strict limit on the number of G★il accounts per individual, yet in practice their risk engines (powered by device fingerprinting, IP history, behavioral biometrics, and SIM patterns) flag "unusual" creation patterns.

Power users routinely maintain dozens or even hundreds of accounts for compartmentalization, testing, or privacy, but Goolag's systems increasingly merge these identities under a single "person" shadow profile using cross-device graph analysis.
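The merging described above can be pictured as a graph problem. The following is a minimal sketch, assuming that accounts are linked whenever they share any observed signal; the function name, the signal labels, and the sample data are all hypothetical, and nothing here is claimed to be how any real risk engine works.

```python
from collections import defaultdict

def link_accounts(observations):
    """Group accounts that share at least one signal, directly or transitively.

    `observations` is a list of (account, signal) pairs. This is a plain
    union-find over accounts, used purely for illustration.
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps lookups short
            x = parent[x]
        return x

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    first_seen = {}  # signal -> first account observed with that signal
    for account, signal in observations:
        find(account)  # register the account even if it never links to another
        if signal in first_seen:
            union(first_seen[signal], account)
        else:
            first_seen[signal] = account

    clusters = defaultdict(set)
    for account in parent:
        clusters[find(account)].add(account)
    return sorted(sorted(members) for members in clusters.values())

# Hypothetical sample data: three accounts chained together by two signals.
observations = [
    ("alice.work", "device:A"),
    ("alice.private", "device:A"),
    ("alice.private", "ip:203.0.113.7"),
    ("test.account", "ip:203.0.113.7"),
    ("bob", "device:B"),
]
clusters = link_accounts(observations)
print(clusters)
```

Note that `alice.work` and `test.account` never share a signal directly; they end up in one cluster only because `alice.private` bridges them. The same logic would also merge two different people who happen to share a single signal.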

One mobile device can be used by multiple persons (family members, shared workplaces, activists, or privacy-conscious users), yet Goolag treats the hardware ID, IMEI, Android ID, advertising ID, sensor fusion data, and installed app list as the primary user proxy.

This inverts the relationship: the device becomes the legal and data subject, while the human is reduced to a transient operator.

In this framework:

- A "user" is defined not by a name or government ID but by a persistent cluster of signals (MAC address randomization resistance, gyroscope drift patterns, typing cadence, installed fonts, battery degradation curve, etc.).

- "Usage" is any interaction that feeds the advertising ID, Goolag Play Services, or Firebase telemetry.

- The "device" is the primary object of value — a node in Goolag's graph database.

- The human becomes an "object of information harvesting," a biological peripheral whose only economic function is to generate behavioral surplus (Zuboff's term for surveillance capitalism).

This inversion mirrors historical authoritarian systems where the individual existed to serve the state's data needs rather than the reverse.

The Goolag Data Harvesting Machine: A Corporate Digital Goolag in Partnership with Mic★osoft, Appl★, Sta★lin★, Face★★★k and Others

Goolag's signup and verification flows are not neutral technical steps; they are engineered extraction points in a global surveillance economy.

When a user creates or recovers a G★il account, the process begins with client-side JavaScript that fingerprints the browser or app environment before any credentials are submitted. Canvas rendering, WebGL capabilities, audio context fingerprinting, font enumeration, and hardware concurrency are collected and hashed into a stable identifier even before the "Next" button is pressed. This fingerprint is immediately matched against known device graphs shared with partners.
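To make the idea of hashing collected attributes into a stable identifier concrete, here is a minimal sketch. It assumes the signals have already been gathered into a dictionary; the field names and values are invented for illustration, and SHA-256 over canonical JSON is a stand-in technique, not a description of any vendor's actual fingerprinting code.

```python
import hashlib
import json

def fingerprint_id(signals: dict) -> str:
    """Hash a set of environment signals into one stable hex identifier.

    Keys are sorted before hashing so the same signals always produce the
    same identifier regardless of the order in which they were collected.
    """
    canonical = json.dumps(signals, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

# Hypothetical signal values; a real page would measure these in the browser.
visit_1 = {
    "canvas_hash": "9f2c1a",
    "webgl_renderer": "ExampleGPU 1000",
    "audio_context": "35.7383",
    "fonts": ["Arial", "DejaVu Sans", "Noto Serif"],
    "hardware_concurrency": 8,
}

# Same signals, collected in a different order on a later visit.
visit_2 = {
    "hardware_concurrency": 8,
    "fonts": ["Arial", "DejaVu Sans", "Noto Serif"],
    "audio_context": "35.7383",
    "webgl_renderer": "ExampleGPU 1000",
    "canvas_hash": "9f2c1a",
}

# One attribute changed, for example after moving to different hardware.
changed = dict(visit_1, hardware_concurrency=16)

print(fingerprint_id(visit_1) == fingerprint_id(visit_2))  # same environment
print(fingerprint_id(visit_1) == fingerprint_id(changed))  # different environment
```

The point of the sketch is that no cookie or login is needed: as long as the measured attributes stay the same, the identifier stays the same across visits.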

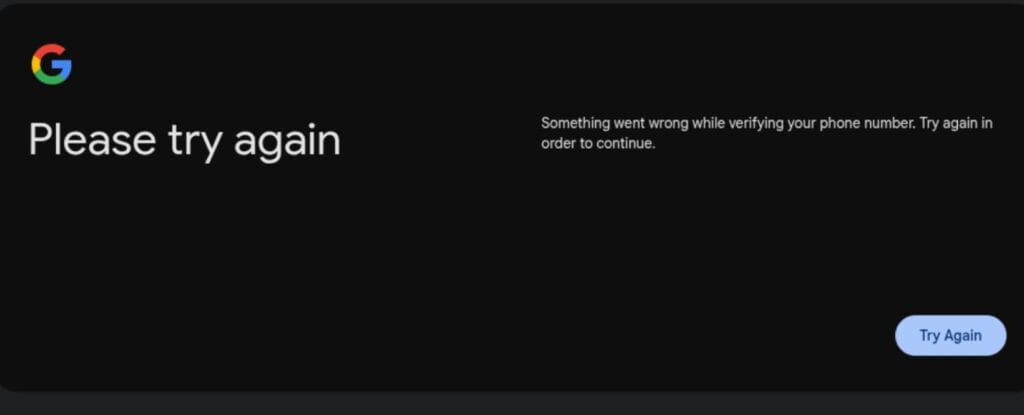

The current verification crisis — where traditional phone entry is deliberately broken for the majority of users while QR codes are pushed — is a textbook example of engineered dependency. The provided URL https://accounts.google.com/devicephoneverification/start?request_id=107c9d22-e9e8-4f8f-a745-a4f9850 reveals several layers upon analysis:

- The endpoint /devicephoneverification/start explicitly frames the phone as a device rather than a communication channel. This is not accidental language.

- The request_id parameter is a unique token that binds the verification session to a specific device fingerprint and account recovery graph. This ID is logged server-side along with the originating IP, user-agent string, TLS fingerprint, and any cookies from other Goolag properties.

- The flow forces the user into a QR code that, when scanned by an Android device with Goolag Play Services, immediately exfiltrates: Android ID, SafetyNet/Play Integrity verdict, hardware serials, nearby Bluetooth/WiFi MACs, location if enabled, and installed Goolag apps. This creates a permanent linkage between the account being recovered and the physical hardware.

- The QR itself contains an encrypted payload that includes the request_id, ensuring the scan cannot easily be decoupled from the original session. This is a form of cryptographic binding that prevents simple screenshot-and-decode bypasses.
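The visible parts of that URL can be inspected with nothing more than the Python standard library. The sketch below only splits the string quoted in this article into its components; it makes no network request, and the helper name is hypothetical. One observation it surfaces: the request_id as quoted here is shorter than a standard UUID, which suggests the value was truncated somewhere along the way, so it is left exactly as given.

```python
import uuid
from urllib.parse import urlsplit, parse_qs

# The URL exactly as quoted in the article (not fetched, only parsed).
URL = (
    "https://accounts.google.com/devicephoneverification/start"
    "?request_id=107c9d22-e9e8-4f8f-a745-a4f9850"
)

parts = urlsplit(URL)
params = parse_qs(parts.query)
request_id = params["request_id"][0]

def is_wellformed_uuid(value: str) -> bool:
    """Return True if `value` parses as a standard 128-bit UUID string."""
    try:
        uuid.UUID(value)
        return True
    except ValueError:
        return False

print("host:      ", parts.netloc)
print("path:      ", parts.path)
print("request_id:", request_id)
print("valid UUID:", is_wellformed_uuid(request_id))
```

Everything beyond the host, path, and parameter name (what the server stores against the token, or what a scan transmits) cannot be read off the URL itself and has to be established by other means.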

This mechanism is perversely elegant. By degrading the text-entry fallback (many users report "invalid number" or endless loops even with working SIMs), Goolag funnels users into the QR path, which is far more invasive. The QR acts as a one-way valve: data flows out of the user's device into Goolag's graph with almost no reciprocal benefit to the user. It is the digital equivalent of a strip search presented as a security measure.

The perversity lies in the deliberate choice to make the less-intrusive phone-entry path fail for most users while wrapping the more-extractive QR method in a veneer of modernity and convenience.

The predetermined request_id acts as a pre-issued warrant for data seizure, turning what should be optional verification into mandatory biometric and hardware submission.

“You, the consumer, purchased your Android device believing in Goggle’s promise that it was an open computing platform and that you could run whatever software you choose on it. Instead, as of September 2026, they will be non-consensually pushing an update to your operating system that irrevocably blocks this right and leaves you at the mercy of their judgement over what software you are permitted to trust.”

Psychoanalytic Reading: The Anal Stage of Digital Entry, Stalinist-Leninist Parallels, and the Philosophy of Dependency

From a psychoanalytic perspective, this verification ritual operates at the "anal stage" of control — the phase where retention and expulsion of information become the primary battleground.

The user is forced to "hold" their data until Goolag permits release, only to have it extracted through a predetermined orifice (the QR scanner). The request_id acts as a sphincter-like gatekeeper: nothing passes without Goolag's explicit authorization and simultaneous data harvest.

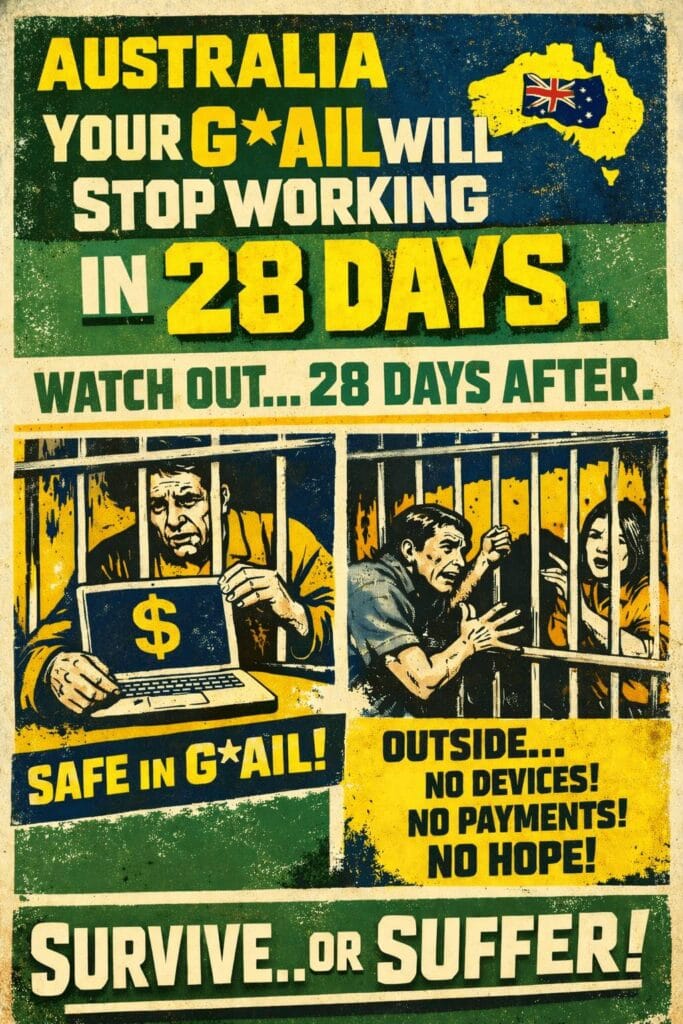

This mirrors the Stalinist-Leninist goolag system in which prisoners were stripped of identity, assigned numbers, and forced to produce labor (or in this case, data) under constant surveillance. The modern version is more efficient: instead of physical barbed wire, the perimeter is built from dependency on cloud services, app ecosystems, and social validation.

The propaganda is subtle and totalizing. Goolag's messaging ("Keep your account secure," "Verify it's you") reframes surveillance as care.

This induces a learned helplessness where users internalize the idea that privacy is dangerous and constant identification is normal. The philosophy of dependency is then up-sold through the consumerist pipeline: more verified accounts lead to more storage, more seamless integration with Appl★ devices, Mic★osoft enterprise logins, Face★★★k social graphs, and Sta★lin★ satellite internet that can triangulate location even without cellular. Each layer tightens the noose while presenting itself as liberation.

Mirroring Acts of the Chelsea Girls (1966)

This mirrors the arc of 20th-century addictions. Andy Warhol's Factory scene and the film Chelsea Girls (1966) documented a world of manufactured personas, voyeurism, and chemical dependency.

Warhol himself was obsessed with recording everything — tapes, photos, films — turning life into consumable surveillance artifacts. The rise of heroin addiction in that era parallels today's device addiction: both provide an escape into numbness while creating profound physical and psychological dependency. Where heroin destroyed the body, constant device identification destroys the private self.

Communication now requires government-grade ID verification for even basic functions, while removing any possibility of anonymous or pseudonymous human interaction without "prying eyes." The goolag is no longer a physical camp; it is the pocket supercomputer that demands biometric obedience for every transaction.

The situation is arguably worse than the Stalinist era because the modern digital goolag is voluntary, global, and profitable. Appl★ OS and A★droid changes further entrench this: Goolag is progressively locking down sideloading, requiring Play Integrity verdicts for basic apps, and deprecating older signing keys.

This creates a sealed ecosystem where the device itself becomes a dumb terminal for Goolag's will. Users are no longer owners but sharecroppers on their own hardware. The free culture of famous authors — once celebrated as open sharing of ideas — has been co-opted into this system, where "free" G★il and cloud services lure creators into uploading their work only to harvest metadata, reading patterns, and behavioral surplus at industrial scale.

Our initial journey, outlined in The Digital Mirror: How Requesting Your Data from Your ISP is a Modern Act of Self-Remembering, was a philosophical and legal mission: reclaiming our scattered digital self under the banner of Australian Privacy Principle (APP) 12, the legal right that grants citizens access to their own personal information held by organisations like ISPs. We invoked that right, sending the formal request into the digital void. We understood the principle of data access; now, we face the practice.

Options for Dummy QR Scanning via External, Laptop-Based, Open-Source Tools

Several open-source approaches exist to interrogate or simulate this QR process without surrendering a real Appl★ or Android device:

- qrencode + manual decoding: Use command-line tools like zbarimg or Python's pyzbar library on a laptop to decode the visual QR into its raw URL or payload. This extracts the exact string (including the request_id=107c9d22-e9e8-4f8f-a745-a4f9850) without ever scanning it with a phone that would link hardware fingerprints.

- Custom verification proxy with mitmproxy or Burp Suite: Run these tools on a laptop to intercept the QR flow. By hosting a local web server that presents a modified version of the verification page, one can sometimes extract the session parameters without completing the phone binding. This requires understanding of the JWT tokens and OAuth flows used in accounts.google.com and allows replay or modification of the request_id session.

- Virtual Android environments with modified Play Services: Run Android-x86, Anbox, or Waydroid on a laptop with heavily patched Goolag Play Services that return fake SafetyNet/Play Integrity verdicts and randomized hardware IDs. The QR can be "scanned" by the virtual device (using laptop webcam or direct URL injection), completing the flow while feeding Goolag garbage telemetry. Projects like microG provide partial open-source replacements that strip much of the surveillance and prevent permanent device linking.

- QR-to-URL extraction and session hijacking research: The specific request_id in the example URL can be analyzed in tools like CyberChef or custom scripts to understand the encoding. Security researchers have published methods (on GitHub and privacy forums) for replaying or decoupling these verification sessions using exported cookies and headers from desktop browsers, effectively bypassing the need for a physical device scan.

- Browser automation with fingerprint randomization: Tools like Puppeteer with stealth plugins, or Mullvad Browser combined with temporary virtual numbers (where still functional) can sometimes bypass the QR wall entirely by avoiding the device-binding path. These can be scripted on a laptop to automate dummy verification without ever involving a real mobile device.

These methods are in a constant arms race with Goolag's detection. The fundamental asymmetry remains: the corporation designs the system to harvest, while users must expend increasing technical effort simply to maintain baseline privacy. In the end, the contemporary digital goolag has perfected what Stalinist-Leninist systems could only approximate through brute force.

By weaponizing convenience, identification, and dependency, Goolag and its corporate partners (Mic★osoft, Appl★, Sta★lin★, Face★★★k) have built the most sophisticated human harvesting operation in history — one that disguises itself as the infrastructure of daily life while stripping users of anonymity and enforcing perpetual data submission.

The QR code with its predetermined request_id is merely the latest visible symptom of a deeper architectural choice: the user exists to feed the machine, not the other way around.

Breaking this cycle requires both technical literacy and a philosophical rejection of the manufactured dependency that Warhol's circle glimpsed decades ago but could never have imagined at planetary scale.
